Why Builders Who Talk Through Their Ideas Ship Better

By Forenta Team

Most builders know the feeling. The idea is clear before the keyboard appears. Then the cursor blinks, and something that felt coherent becomes fragmented sentences that do not capture what was meant.

This is not a writing problem. It is a working memory problem, and it is well documented in cognitive science.

Working memory under expressive load

When you write, two processes compete for the same limited cognitive resource: formulating the idea and encoding it in text.¹ For well-defined thoughts, the competition is invisible. For exploratory or early-stage thinking, it shows up as the sentence you abandoned, the point that was clearer in your head than it looks on screen. Kellogg’s (1996) model of working memory in writing identifies this dual-process bottleneck as a fundamental constraint on written idea generation.

Research on cognitive offloading, the practice of externalizing mental content to reduce working memory load (Risko & Gilbert, 2016),² suggests that speaking can be a more fluid mechanism for generative thinking than typing. Speech has been the primary mode through which people processed ideas for most of human history (Ong, 1982).³ Writing into a structured field carries an implicit demand for completeness that interferes with the exploratory phase of thinking.

The keyboard was designed for transcription. Voice is what humans use to think out loud.

The brain dump before the build

Experienced builders often describe the same pattern. Before writing anything formal, they talk through the idea. Sometimes to a colleague, sometimes alone. Speaking forces serial articulation that reveals which parts of the idea are solid and which are still vague. Chi et al. (1989) studied a related effect as self-explanation, finding that students who explained worked examples to themselves learned more and were better at spotting gaps in their own understanding.⁴

Voice input changes one thing about this pattern: the rough output no longer needs to be discarded. Instead of thinking out loud and then retyping from scratch, you speak the rough version into the tool and refine from there. The brain dump becomes the starting point, not a throwaway step.

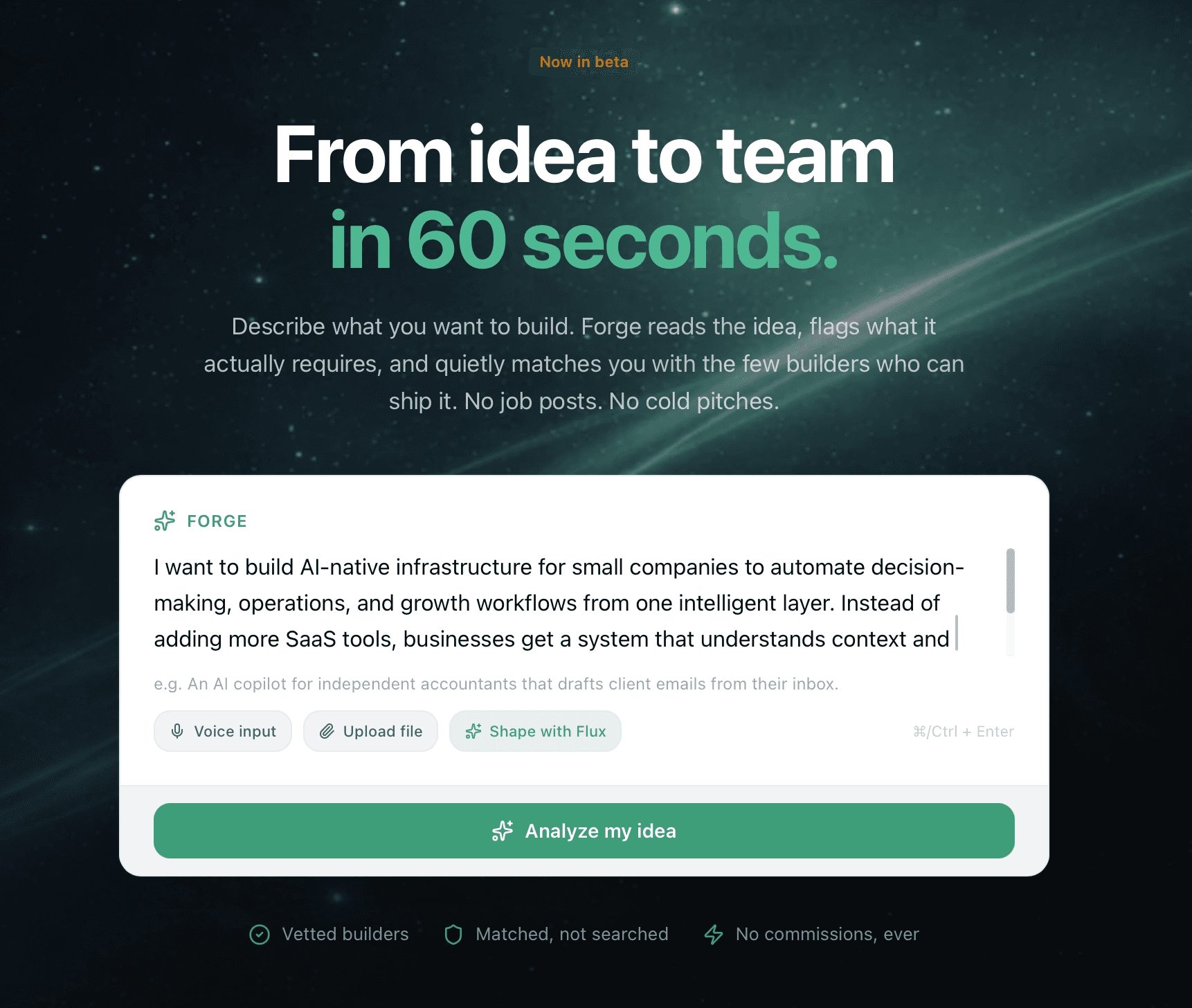

In Forge, the project description field includes a voice input option for exactly this reason. What you say does not need to be structured or polished. It becomes the raw material that gets refined into something the matching system can work with. Speaking through your idea first tends to produce more specific, concrete language than typing under the pressure of a blank field.

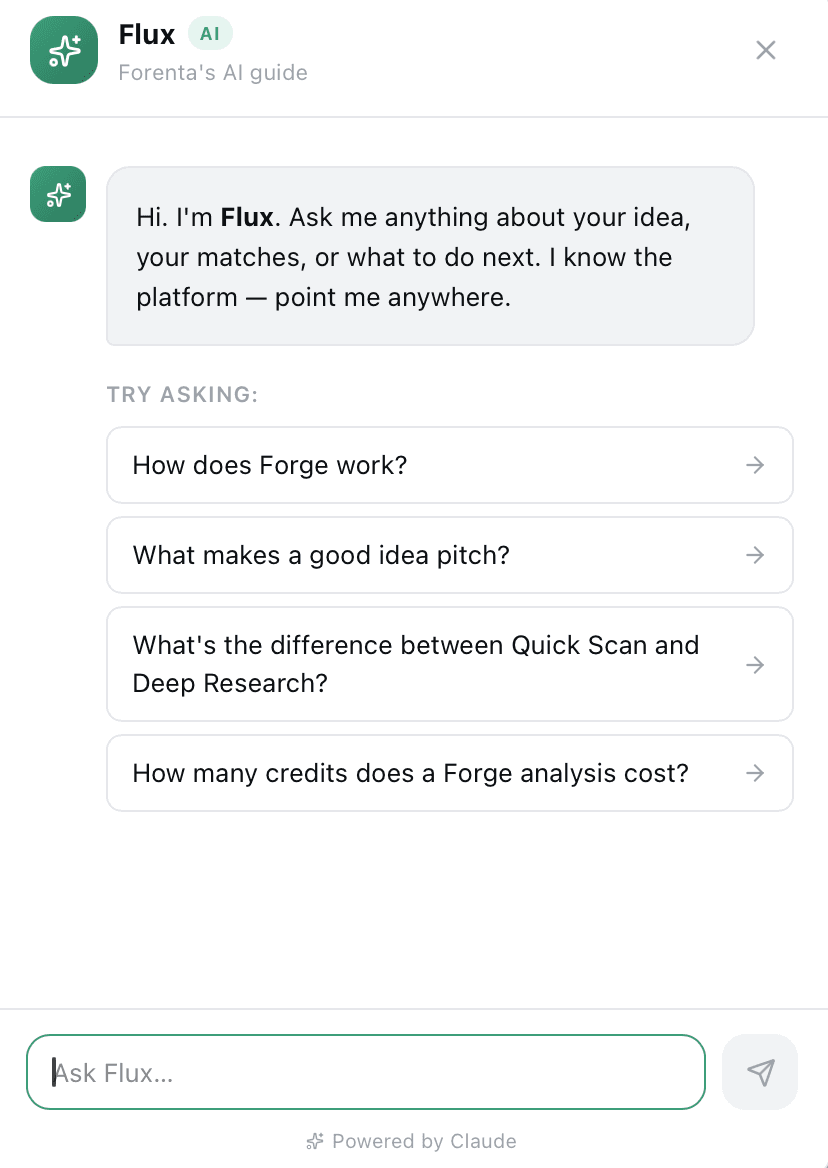

Talking to Flux

Flux, the AI guide built into the platform, handles a different kind of voice use case: not dictation into a field, but dialogue with an AI to help work through what you are trying to say before you commit it to a form. The distinction matters. Dictation captures what you already know how to articulate. Conversation helps you figure out what you are still trying to articulate.

For founders at the early idea stage, the friction of opening the Forge project form is often the first real barrier to using the platform. Talking to Flux sidesteps that by letting you start with what you have, not what you have been able to formalize yet. A conversation that starts rough and clarifies through dialogue tends to produce a project description that is more specific and more honest than one typed from scratch under formal constraint.

From voice to match-ready

Matching on Forenta is sensitive to the quality of the project description. A vague or generic description produces weaker matches than one that reflects what the founder actually needs. Used as a first draft, voice input helps here: people speaking without the pressure of a text field tend to use the concrete language of the actual problem rather than the abstracted language of form-filling.

A founder who says ‘we’re building a compliance tool for mid-market SaaS and need someone who has dealt with SOC 2 before’ gets a stronger match signal than one who types ‘looking for a backend developer with compliance experience.’ Voice input tends to surface the specific version.

What voice input does not fix

Voice input has a clear advantage for early-stage idea exploration, project descriptions that require narrative context, and feedback that is primarily about direction or tone. The advantage comes from the lower cognitive cost of speaking relative to typing in a context that implies formality.

It is less useful for precise technical specifications, exact wording that will be reviewed carefully, or structured task lists where accuracy matters from the start. Speaking is good for getting the shape of an idea down. Writing is better for precision and final review. The two complement each other rather than substitute for each other.

The honest limitations are practical. Voice input requires a reasonably quiet setting and some tolerance for imprecision in the first pass. The AI-assisted refinement is effective for most descriptive content but less reliable for highly technical material with specific terminology. What voice input changes is the starting point. A rough spoken description is almost always a better foundation than a blank field that never gets filled in.

References

- 1. Kellogg, R. T. (1996). A model of working memory in writing. In C. M. Levy & S. Ransdell (Eds.), The science of writing: Theories, methods, individual differences, and applications (pp. 57–71). Lawrence Erlbaum.

- 2. Risko, E. F., & Gilbert, S. J. (2016). Cognitive offloading. Trends in Cognitive Sciences, 20(9), 676–688.

- 3. Ong, W. J. (1982). Orality and literacy: The technologizing of the word. Methuen.

- 4. Chi, M. T. H., Bassok, M., Lewis, M. W., Reimann, P., & Glaser, R. (1989). Self-explanations: How students study and use examples in learning to solve problems. Cognitive Science, 13(2), 145–182.